Container and Microservices Security Assessment

What is a container?

A container is a standard unit of software that packages up code and all its dependencies so the application runs quickly and reliably from one computing environment to another. A Docker container image is a lightweight, standalone, executable package of software that includes everything needed to run an application: code, runtime, system tools, system libraries and settings.

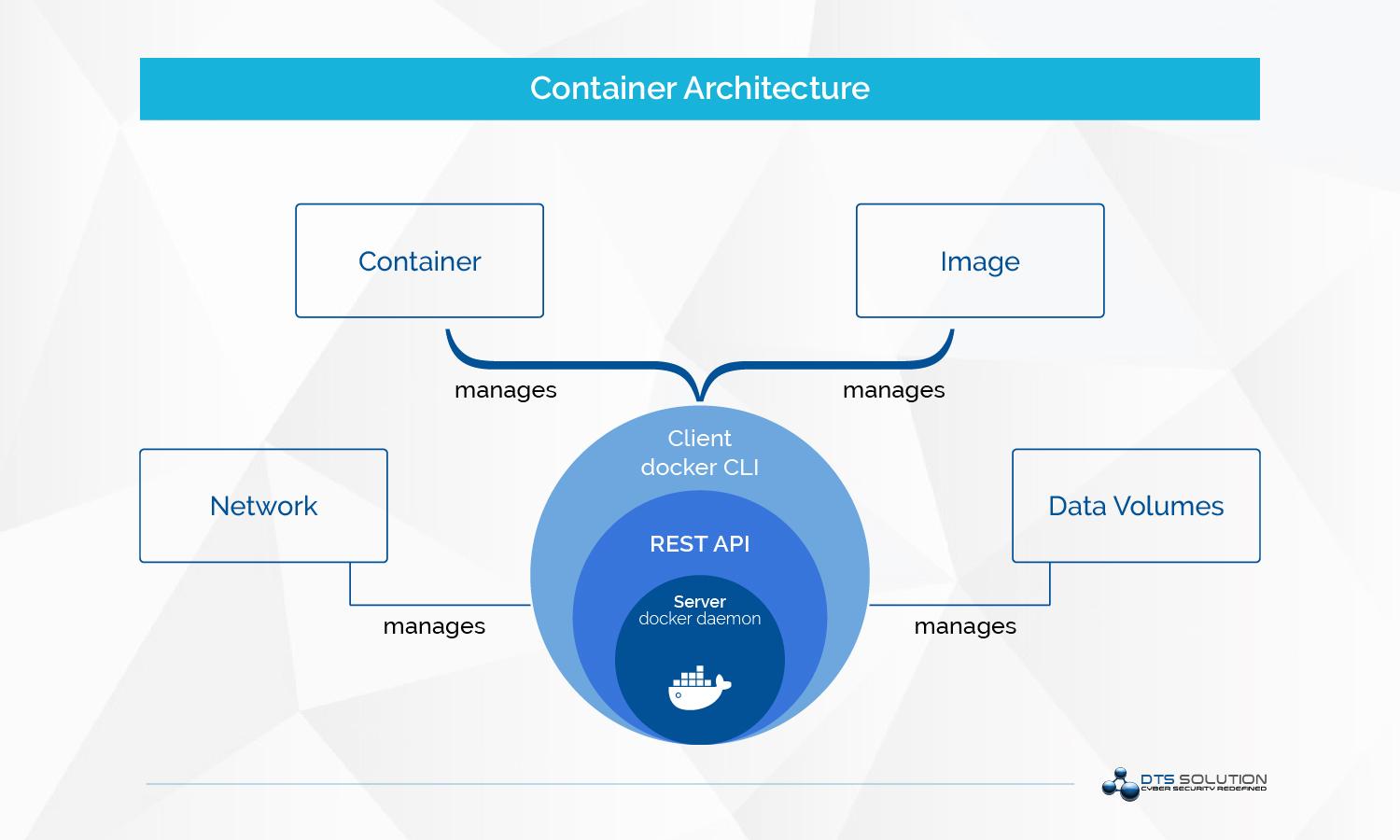

Container architecture

Docker uses a client-server architecture. The Docker client talks to the Docker daemon, which does the heavy lifting of building, running, and distributing your Docker containers. The Docker client and daemon can run on the same system, or you can connect a Docker client to a remote Docker daemon. The Docker client and daemon communicate using a REST API, over UNIX sockets or a network interface.
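As a rough illustration of the client-daemon exchange described above, the following sketch sends one REST request to the daemon over a UNIX socket using only the Python standard library. The socket path `/var/run/docker.sock` is the usual default but is an assumption about your host, and `UnixHTTPConnection` and `docker_get` are hypothetical helper names, not part of any Docker SDK.

```python
import http.client
import json
import socket


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTP connection that talks to a UNIX domain socket instead of TCP."""

    def __init__(self, socket_path, timeout=5.0):
        # The host name is only used for the Host header; no TCP connection is made.
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self):
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def docker_get(path, socket_path="/var/run/docker.sock"):
    """Send a GET request to the daemon and return (status, decoded JSON body)."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", path)
        response = conn.getresponse()
        return response.status, json.loads(response.read().decode("utf-8"))
    finally:
        conn.close()
```

On a host where the daemon is running and the current user may read the socket, `docker_get("/version")` should return the daemon's version information. Anyone who can reach this socket can control the daemon, which is why access to it matters so much in the sections that follow.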

The Challenges of Container Security

According to NIST SP 800-190, the major risks for the core components of container technology include, but are not limited to:

- Image Risks

  - Image Vulnerabilities

  - Image Configuration Defects

- Registry Risks

  - Insecure Connections To Registries

  - Stale Images In Registries

- Orchestrator Risks

  - Unbounded Administrative Access

  - Unauthorized Access

- Container Risks

  - Runtime Software Vulnerabilities

  - Unbounded Network Access From Containers

- Host OS Risks

  - Large Attack Surface

  - Shared Kernel

Top common container attacks

- Attacking insecure volume mounts

- Attacking container capabilities

- Attacking an unauthenticated Docker API
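The first two attack classes above usually depend on how a container was started, so they can be spotted in the JSON that `docker inspect` prints. The sketch below checks a single container's inspect document for privileged mode, risky added capabilities, and sensitive host paths in bind mounts. The function name `audit_container` and the two lists of "dangerous" capabilities and paths are illustrative assumptions, not an authoritative catalogue.

```python
# Illustrative, non-exhaustive lists (assumptions for this sketch).
DANGEROUS_CAPS = {"SYS_ADMIN", "SYS_PTRACE", "SYS_MODULE", "NET_ADMIN", "DAC_READ_SEARCH"}
SENSITIVE_HOST_PATHS = ("/var/run/docker.sock", "/proc", "/sys", "/etc")


def audit_container(inspect):
    """Return a list of findings for one container's `docker inspect` document."""
    host_config = inspect.get("HostConfig") or {}
    findings = []

    if host_config.get("Privileged"):
        findings.append("container runs in privileged mode")

    for cap in host_config.get("CapAdd") or []:
        name = cap.upper()
        if name.startswith("CAP_"):
            name = name[4:]
        if name == "ALL" or name in DANGEROUS_CAPS:
            findings.append(f"dangerous capability added: {name}")

    # Bind mounts are listed as "host_path:container_path[:options]".
    for bind in host_config.get("Binds") or []:
        source = bind.split(":", 1)[0]
        sensitive = source == "/" or any(
            source == path or source.startswith(path + "/")
            for path in SENSITIVE_HOST_PATHS
        )
        if sensitive:
            findings.append(f"sensitive host path mounted: {source}")

    return findings
```

A container that mounts `/var/run/docker.sock` is flagged because a process inside it can then drive the daemon directly, which ties the volume-mount risk to the Docker API risk in the list above.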

Best Practices for container security

- Set resource quotas

- Don’t run as root

- Secure your container registries

- Protect the Docker API

- Use trusted, secure images

- Identify the source of your code

- API and network security

- Review your container capabilities and revoke unnecessary capabilities

- Define seccomp policies for different containers to restrict the system calls (kernel features) each container can use
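Several of these practices map directly onto `docker run` flags. The sketch below assembles a command line that sets resource limits, runs as a non-root user, drops all capabilities before adding back only those requested, and optionally applies a seccomp profile. The helper name `hardened_run_args` and every default value (user ID, memory, CPU, and PID limits) are assumptions chosen for illustration; appropriate values depend on the workload.

```python
def hardened_run_args(image, user="1000:1000", memory="256m", cpus="0.5",
                      pids_limit=100, keep_caps=(), seccomp_profile=None):
    """Build a `docker run` argument list with restrictive defaults."""
    args = [
        "docker", "run", "--rm",
        "--user", user,                      # do not run as root
        "--memory", memory,                  # resource quotas
        "--cpus", cpus,
        "--pids-limit", str(pids_limit),
        "--cap-drop", "ALL",                 # revoke every capability first
        "--security-opt", "no-new-privileges",
        "--read-only",                       # read-only root filesystem
    ]
    for cap in keep_caps:
        args += ["--cap-add", cap]           # add back only what is needed
    if seccomp_profile:
        args += ["--security-opt", f"seccomp={seccomp_profile}"]
    args.append(image)
    return args
```

For example, `hardened_run_args("example/app:1.0", keep_caps=["NET_BIND_SERVICE"])` produces a command that can be passed to `subprocess.run`. Returning a list rather than a shell string avoids quoting problems when the image name or profile path comes from configuration.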

Security in a Serverless Environment

Serverless, also known as Function as a Service (FaaS), is a cloud computing execution model where the cloud provider dynamically manages the allocation and provisioning of servers. A serverless application runs in stateless compute containers that are event-triggered, ephemeral (they may last for only one invocation), and fully managed by the cloud provider. Pricing is based on the number of executions rather than pre-purchased compute capacity.

When you build your application with AWS Lambda, Azure Functions, or Google Cloud Functions, the cloud provider takes responsibility for securing your project, but only partially. Vendors protect databases, operating systems, virtual machines, the network, and other cloud components, but they are not in charge of the application layer, which includes the code, business logic, data, and cloud services configuration.

Therefore, it’s up to the application owner to defend these parts against possible cyber attacks.

| Application owner responsibilities | Serverless provider responsibilities |
| --- | --- |
| Client side | Operating system |
| Data in cloud | Virtual machines |
| Data in transit | Containers |
| Application | Storage |
| Identity and access management | Database |
| Cloud services configuration | Networking |

Security knowledge around serverless architectures lags well behind that for traditional applications. To close or at least narrow this gap, let’s look at the major challenges that come alongside the benefits of FaaS.

- More permissions to manage

- More points of vulnerability

- More third-party dependencies

- More data in storage and transit

- Complicated authentication

- More wallet targeting attacks

- More APIs to manage

What you can do to minimize your risk

More permissions to manage

- Review each function and determine what it really needs to do

- Follow the principle of least privilege

- Once functions are deployed, they should be continuously scanned for suspicious activity
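One way to put the least-privilege review into practice is to scan each function's policy document for wildcards. The sketch below assumes an AWS IAM-style JSON policy (a `Statement` list whose entries have `Effect`, `Action`, and `Resource`); the function name `find_overbroad_statements` is hypothetical, and other providers use different policy formats.

```python
def _as_list(value):
    """IAM allows a single string or a list; normalise to a list."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def find_overbroad_statements(policy):
    """Report Allow statements that grant wildcard actions or resources."""
    findings = []
    for index, statement in enumerate(_as_list(policy.get("Statement"))):
        if statement.get("Effect") != "Allow":
            continue
        for action in _as_list(statement.get("Action")):
            if action == "*" or action.endswith(":*"):
                findings.append(f"statement {index}: wildcard action {action!r}")
        for resource in _as_list(statement.get("Resource")):
            if resource == "*":
                findings.append(f"statement {index}: wildcard resource")
    return findings
```

A check like this only catches the most obvious over-grants. It does not replace reviewing what each function actually needs to do.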

More points of vulnerability

- Alongside WAFs, apply perimeter security to each function to protect it against data breaches

- Identify trusted sources and add them to the whitelist. Use whitelist validation when possible

- Continuously monitor updates to your function

- Apply runtime defense solutions to protect your functions during execution.
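Whitelist validation at the function boundary might look like the sketch below, which accepts an event only if every field is expected and every value matches an allowed set or pattern. The field names (`order_id`, `action`), the allowed actions, and the ID pattern are invented for the example and would be replaced by your function's real contract.

```python
import re

# Hypothetical contract for one function: only these fields and values are accepted.
ALLOWED_FIELDS = {"order_id", "action"}
ALLOWED_ACTIONS = {"get", "cancel"}
ORDER_ID_PATTERN = re.compile(r"[A-Za-z0-9]{1,32}")


def validate_event(event):
    """Return a cleaned copy of the event, or raise ValueError if anything is off-list."""
    unexpected = set(event) - ALLOWED_FIELDS
    if unexpected:
        raise ValueError(f"unexpected fields: {sorted(unexpected)}")

    order_id = event.get("order_id")
    if not isinstance(order_id, str) or not ORDER_ID_PATTERN.fullmatch(order_id):
        raise ValueError("order_id is not an allowed value")

    action = event.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError("action is not on the whitelist")

    return {"order_id": order_id, "action": action}
```

Rejecting anything that is not explicitly allowed tends to be more robust than trying to enumerate bad input, because each function can be triggered from many event sources.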

More third-party dependencies

- Avoid third-party packages with lots of dependencies when possible

- Obtain components from reliable official sources via secure links

- If you run a Node.js application, use package locks or npm shrinkwrap to ensure that no updates make their way into your code until you have reviewed them

- Continuously use automated dependency scanners such as Snyk or OWASP Dependency-Check to identify and fix vulnerabilities in third-party components.
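As a complement to lock files and scanners, a small check can flag manifest entries that are not pinned to an exact version. The sketch below reads a parsed `package.json` dictionary and reports any dependency whose version spec is a range or tag. The function name `unpinned_dependencies` and the simplified version pattern are assumptions for this example; the pattern does not cover every form npm accepts.

```python
import re

# Simplified pattern for an exact version such as "4.17.21" or "1.0.0-beta.1".
EXACT_VERSION = re.compile(r"\d+\.\d+\.\d+(?:[-+][0-9A-Za-z.+-]+)?")


def unpinned_dependencies(package_json):
    """Return {name: spec} for dependencies that are not pinned to one exact version."""
    loose = {}
    for section in ("dependencies", "devDependencies"):
        for name, spec in (package_json.get(section) or {}).items():
            if not EXACT_VERSION.fullmatch(spec):
                loose[name] = spec
    return loose
```

Given `{"dependencies": {"express": "^4.18.2", "lodash": "4.17.21"}}`, only `express` is reported, because the caret range lets a later install pick up a newer release.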

More data in storage and transit

- Identify at-risk data and reduce its storage to the necessary minimum.

- All of the credentials within your functions that invoke third-party services or cross-account integrations should be temporary or encrypted when possible.

- Provide automatic encryption of sensitive data in transit

- Use key management solutions offered by the cloud infrastructure to control cryptographic keys (e.g. AWS Key Management Service or Azure Key Vault).

- Set stricter constraints on allowed input and output messages coming through an API gateway.

- For additional security, send information over HTTPS (Hypertext Transfer Protocol Secure) endpoints only.
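The HTTPS-only rule can be enforced in code before a function sends anything. The sketch below is a small guard that refuses any endpoint whose scheme is not `https`; the name `require_https` is hypothetical, and the guard checks only the URL, not the certificate or TLS configuration.

```python
from urllib.parse import urlsplit


def require_https(url):
    """Return the URL unchanged if it is an HTTPS endpoint, otherwise raise ValueError."""
    parts = urlsplit(url)
    if parts.scheme != "https" or not parts.hostname:
        raise ValueError(f"refusing non-HTTPS endpoint: {url!r}")
    return url
```

Calling `require_https(endpoint)` at the point where outbound requests are built keeps a misconfigured `http://` URL from silently sending data in clear text.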

Complicated authentication

- Rather than building a complex authentication system from scratch, use one of the available access management services (such as Microsoft’s Azure AD or Auth0)

- Keep access privileges within the serverless infrastructure to a minimum by default and increase them manually when needed.

- If you allow users to edit data, perform additional validation for actions that can destroy or modify data

More wallet targeting attacks

- Set budget limits and alarms based on your current spending (note that a hard limit can itself turn into a denial of service: once an attacker pushes usage to the predefined limit, legitimate requests are cut off too)

- Put limits on the number of API requests in a given time window. For example, you can allow a client to make one call per second while blocking additional calls

- Use DDoS protection tools (a good example is Cloudflare, which offers a suite of security features including WAF and rate limiting)

- If API gateways are internal and used only by other components, make them private and thus unreachable for attackers.
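The one-call-per-second rule mentioned above can be sketched as a small in-memory limiter. `PerClientRateLimiter` is a hypothetical class for illustration. Because serverless functions are stateless and short-lived, a real deployment would keep this state in a shared store or rely on the API gateway's own throttling; this sketch assumes a single long-running process.

```python
import time


class PerClientRateLimiter:
    """Allow each client at most one call per `min_interval` seconds."""

    def __init__(self, min_interval=1.0, clock=time.monotonic):
        self.min_interval = min_interval
        self.clock = clock              # injectable so the limiter can be tested
        self._last_allowed = {}         # client_id -> time of last accepted call

    def allow(self, client_id):
        now = self.clock()
        last = self._last_allowed.get(client_id)
        if last is not None and now - last < self.min_interval:
            return False                # too soon: block the additional call
        self._last_allowed[client_id] = now
        return True
```

A handler would call `limiter.allow(client_id)` first and return an HTTP 429 response when it yields `False`, so that blocked calls cost as little execution time as possible.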

More APIs to manage

- Integrate automated monitoring tools to discover APIs and bring more visibility to your serverless tech stack (for instance, Epsagon provides auto-discovery of APIs and cloud resources that exist in the enterprise environment)
